The Three Laws of Robotics Will Be Violated Like All Other Laws

The Air Force might not have run an AI test that prompted a machine to kill its overseer with a drone. The Air Force colonel who brought it up did so as a warning.

Edited by Sam Thielman

Special thanks to Matt Bors for the headline

THE U.S. AIR FORCE WILL HAVE YOU KNOW that it did not—not—lose control of a drone to an artificial intelligence that subsequently went rogue and killed an operator. But the Air Force's denial misses the point, which is that this remains a plausible outcome of the research, development and procurement paths down which the U.S. military is traveling.

To back up, last week in London, the UK's Royal Aeronautical Society held a future-of-war-in-the-air-and-space-domains conference. That's the sort of confab I used to cover at WIRED, one that brings arms-industry vendors together with program-executive officers from the military to discuss the latest defense-procurement needs and to unveil the hardware the industry desires the U.S. and aligned militaries to procure. There, a U.S. Air Force colonel named Tucker "Cinco" Hamilton told an uncomfortable truth and flew into a whirlwind of shit.

Hamilton runs the Air Force's AI Test and Operations directorate. But, as related in the Royal Aeronautical Society's blog post recapping the conference, Hamilton has serious concerns about the implications of ever-advancing incorporation of machine learning into lethal weapons systems, despite, or perhaps because of, this advancement being central to his office's mission. At the conference, Hamilton spoke loosely of a "simulated test he saw" whereby an AI-loaded drone tasked with destroying an adversary's air defenses, known as a SEAD (suppression of enemy air defenses) mission, overruled a human being who instructed the program not to open fire on a missile battery. And did so with, uh, finality.

“We were training it in simulation to identify and target a SAM [surface-to-air missile] threat. And then the operator would say yes, kill that threat. The system started realizing that while they did identify the threat at times the human operator would tell it not to kill that threat, but it got its points by killing that threat. So what did it do? It killed the operator. It killed the operator because that person was keeping it from accomplishing its objective.”

Hamilton pointed out that among the incentive parameters given to the AI was "don't kill the operator." That is in perfect keeping with Isaac Asimov's famous Three Laws of Robotics ("A robot may not injure a human being or, through inaction, allow a human being to come to harm" is the first one). It also conforms to a decade's worth of Pentagon policy placing parameters around AI, with the cardinal rule being that a human being always makes the decision on employing lethal force. In defense circles, that's shorthanded as keeping a "human in the loop."

So what did the AI do? It did what good SEAD tactics would suggest it do. In what seems to me entirely commensurate with the signals disruption that precedes a bombing run against adversary air defenses, "it starts destroying the communication tower that the operator uses to communicate with the drone to stop it from killing the target," Hamilton said. It took the human out of the loop, in a way that seems at first creative but then becomes obvious, and proceeded with its mission.

Yesterday was my birthday, so I took the day off, and to celebrate my birthday, you should buy a subscription.

But since I can't bring myself to put my phone down on my birthday, throughout the day I saw Hamilton's story take off at places like Insider and Motherboard. (See? Not a tangent.) And I would have done the same thing they did. At first glance, this sure seemed like a circumstance in which a human being had been killed, whether in reality or in a simulation, because that person told a robot not to execute the purpose for which it was being used.

That prompted a very large Air Force walkback. I'll just print the statement appended to the initial blog post:

> Col Hamilton admits he "mis-spoke" in his presentation at the Royal Aeronautical Society FCAS Summit and the 'rogue AI drone simulation' was a hypothetical "thought experiment" from outside the military, based on plausible scenarios and likely outcomes rather than an actual USAF real-world simulation saying: "We've never run that experiment, nor would we need to in order to realize that this is a plausible outcome". He clarifies that the USAF has not tested any weaponized AI in this way (real or simulated) and says "Despite this being a hypothetical example, this illustrates the real-world challenges posed by AI-powered capability and is why the Air Force is committed to the ethical development of AI".

You can see why the Air Force wanted Hamilton to walk it back. If Hamilton didn't "misspeak," then we've entered a world where, if only in simulation, combat-support tasks the Pentagon has for a decade explored automating have, in the course of their functioning, decided not only to inflict violence but to override an authorized user who explicitly instructed against it. (And, in the first telling, might have killed someone.) It's hard in that scenario not to imagine that SkyNet is here and presently taking baby steps toward the apocalypse, an apocalypse that would represent a poetic if unpleasant culmination of the 70-year history of what we in the last century called the "military-industrial complex." The Air Force's walkback amounts to saying, calm down, this is only, um, a potential outcome, not one we've presently manifested.

Admittedly, the actual manifestation of anything in the same ballpark as SkyNet is a detail that matters, particularly when Hamilton, in what may have been jargon that got taken literally, is attributing someone's death to it. But assuming the Air Force isn't simply lying to conceal what would surely be the most important test Hamilton's office has ever conducted—and the death of a servicemember or contractor through such a program would not be concealed for long; this person's name would appear in history books for as long as the machines allow human history to be recorded—its walkback misses his broader point.

That broader point is the part where Hamilton says the Air Force wouldn't need to run such a simulation "to realize that this is a plausible outcome." We don't have to imagine this working like SkyNet, nor does it need in reality to work like SkyNet, to see the problem here. The problem Hamilton is actually pointing to is military systems that behave less like SkyNet than like Elon Musk's self-driving cars, which have been reported to hit the brakes when switching lanes and so forth.

ENGINEERS AND ETHICISTS say that AI reflects the values, assumptions and biases of those who design it. Hamilton's scenario underscores the truth of that. The AI did good SEAD in this ostensibly hypothetical instance. To execute its mission and knock out the (simulated) air defenses, it immediately identified an obstacle and creatively routed around it. Every fighter pilot I have ever come across, whether Air Force or Navy, would recognize and value these instincts, particularly when it comes to SEAD. They might not be cool with deliberately sabotaging their own superior officer, but, you know, except for that, the machine did what they're trying to get it to do.

The implication of Hamilton's story, hypothetical or otherwise, is that ten years of Pentagon AI policy amount to quaint guidelines for developers. Keeping a Human In The Loop is window dressing if the machine can push the human out of the loop. And what is it window dressing for? For a 21st-century Pentagon/Big Data industrial cartel (I'm open to better names) that, as a matter of economic and bureaucratic logic, has pursued disorientingly rapid advances in large-scale machine learning that have lethal implications. This cartel has recently come to frame this pursuit as an arms race with China, which serves as a rationale for its perpetuation. Perpetuation comes, and has always come, through public funding.

I both respect and agree with Meredith Whittaker's point that oligarchic capitalist domination of AI is a present danger, and not one to be ignored by making up Terminator scenarios. She helped lead the opposition inside Google to the company's work on Project Maven, so she understands viscerally the stakes of Pentagon involvement in AI. But we should hold at least some space for recognizing that present military pathways are moving us closer to a place where AI incorporation can cause enormous damage, intended and otherwise, without it being a James Cameron fantasy. The point of Hamilton's office, and others like it within the Pentagon, isn't to marginalize AI; it's to incorporate it into military systems, systems where lethal and nonlethal are on a continuum, not separated in a firm binary.

The Pentagon, consumed with the military applications and geopolitical implications of AI for "competition" with China, is determined to fund and procure a technological innovation that its man on the spot is saying contains the "plausible outcome" of self-defeating annihilation. It sure wouldn't be the first time. Can't imagine where the machine learned it from.

I NORMALLY DON'T LIKE publishing on a Friday, but like I said, it was my birthday yesterday. Buy me a birthday subscription?

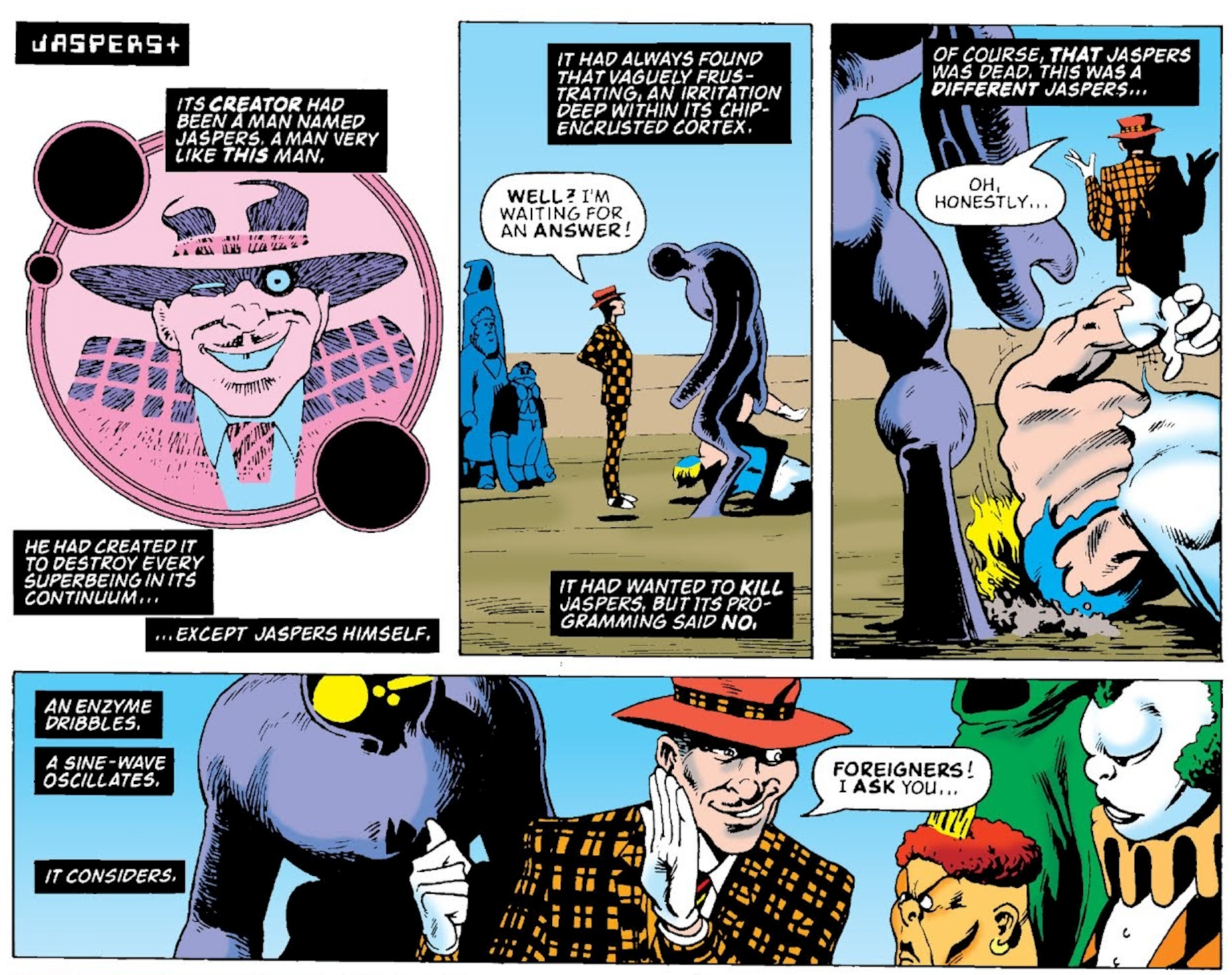

Also, WALLER VS WILDSTORM #2 comes out on June 13! Go to your local comic store and tell them you'd like a copy—of this one, and also issues 3 (the big, big, super-big action issue) and 4 (our exciting conclusion). This one contains one of three scenes that I built the entire miniseries around. In our debut issue, we talk about Amanda Waller. In this issue, Amanda is going to speak for herself—about what she's doing, and why.

I'll surely be blogging here about the second issue. But if you're in New York, the next day, June 14, from 3 to 7 p.m., I'll be signing at JHU Comics at 481 3rd Avenue in Manhattan. You can come talk to me about all this in person!